DeepSeek seeks first external funding to retain talent with stock options

The AMW Read

DeepSeek's first external funding overturns a core claim in §4 case study (Deliberate 5): Liang refused outside investors. Novelty 3 resolves a firm stance. Significance 2: talent war in CN foundation models is segment-level, not cross-segment.

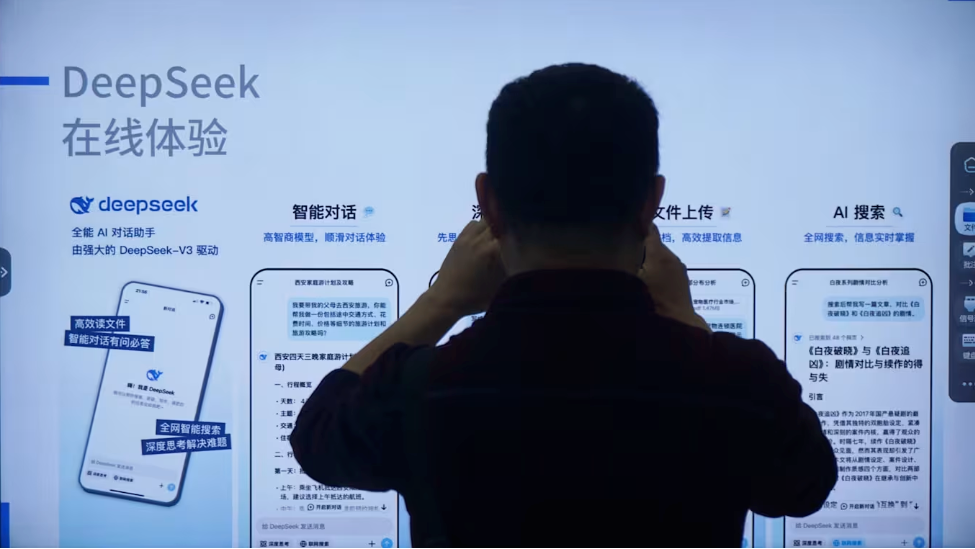

DeepSeek, the Chinese AI lab behind the R1 reasoning model that shook global markets in early 2025, is pursuing its first external equity raise. According to the Financial Times, the company is in talks with a small group of strategic investors for a round valuing the firm at over $200 billion. The amount is described as “symbolic” (in the low single-digit billions) and is far smaller than typical mega-rounds from peers. The primary goal is to establish a valuation that can underwrite stock options for researchers, several of whom have already left for competitors like ByteDance and Tencent.

This capital move is a tactical response to a deepening talent war in Chinese AI, not a sign of financial distress. DeepSeek founder Liang Wenfeng has long funded the lab from his quantitative-trading profits and resisted outside capital, prioritizing research over commercialization. The lab’s lack of a clear business model and Liang’s reluctance to share detailed financials complicate the fundraising. By setting a valuation through a modest round, stock repurchases, or performance-based metrics, DeepSeek hopes to offer equity incentives comparable to those of rivals (MiniMax at $34B, Zhipu AI at $58B) without ceding control or shifting its research-first ethos.

The episode surfaces a common tension in AI: talent retention often forces capital structure changes even at cash-rich labs. For the broader industry, it underscores how the fastest-ARR-ramp pattern in foundation models is now colliding with the need for valuation clarity. If DeepSeek succeeds, it may retain its core team while preserving its unique, idealistic culture. If not, attrition could hollow out one of China’s most innovative AI labs, with implications for the balance of power in open-weight reasoning models.
