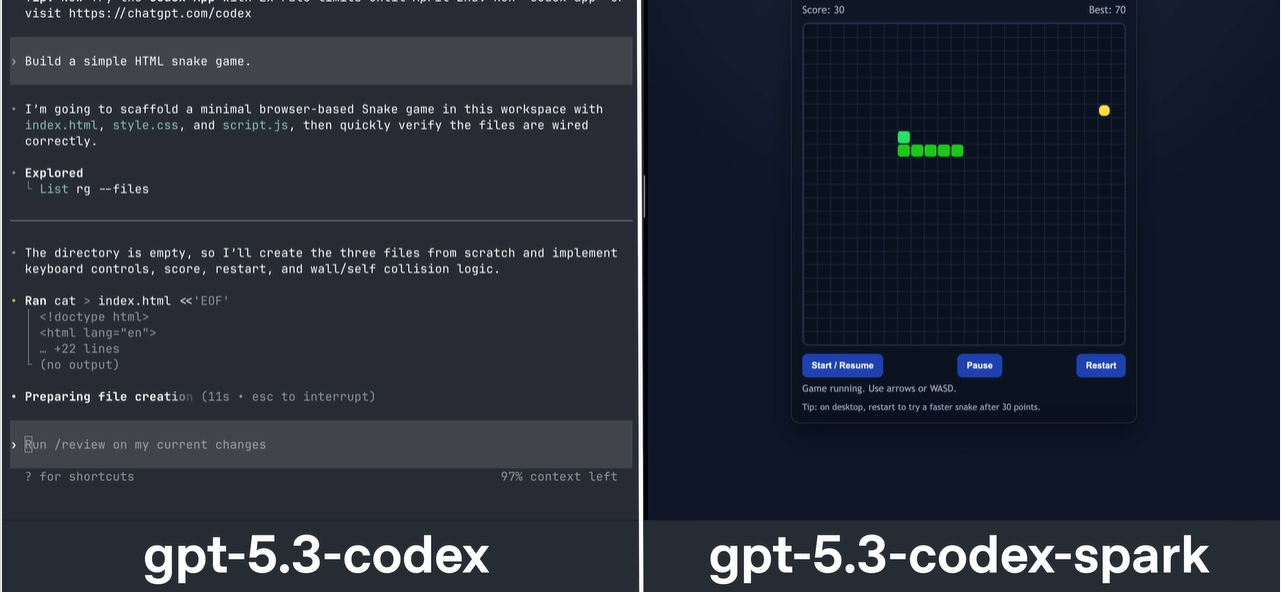


The AMW Read

Updates the OpenAI case study by demonstrating a high-speed inference breakthrough specifically optimized for the developer inner loop using specialized Cerebras hardware.


AI Coding · Case Studies · Silicon Substrate

OpenAI unveiled GPT-5.3-Codex-Spark, which delivers over 1,000 tokens per second for real-time coding on Cerebras WSE-3 hardware. The system is described as 15x faster than its predecessors, with roundtrip overhead cut by 80% and time-to-first-token cut by 50%, so feedback arrives almost instantly. At that speed, an AI assistant in a professional IDE behaves less like a reactive tool and more like a fluid, real-time collaborator. More broadly, the release signals a shift toward sub-second, hardware-accelerated interactive engineering loops. 🚀
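
To make those figures concrete, here is a rough back-of-the-envelope sketch of what they could mean for a single code suggestion. It is an illustration under stated assumptions, not a benchmark: the 15x figure is treated as a throughput ratio, and the baseline time-to-first-token (0.5 s) and the 300-token edit size are hypothetical values chosen only for the arithmetic.

```python
def response_time(n_tokens: int, tokens_per_s: float, ttft_s: float) -> float:
    """Seconds until the last token arrives: first-token wait plus streaming time."""
    return ttft_s + n_tokens / tokens_per_s


# Figures taken from the text above.
FAST_TPS = 1000.0        # "over 1,000 tokens per second"
SPEEDUP = 15.0           # "15x faster"; ASSUMED here to mean generation throughput
TTFT_REDUCTION = 0.50    # "time-to-first-token cut by 50%"

# Hypothetical values, not from the source.
BASELINE_TTFT_S = 0.5    # assumed baseline time-to-first-token, in seconds
N_TOKENS = 300           # assumed size of one code suggestion, in tokens

BASELINE_TPS = FAST_TPS / SPEEDUP
FAST_TTFT_S = BASELINE_TTFT_S * (1.0 - TTFT_REDUCTION)

baseline = response_time(N_TOKENS, BASELINE_TPS, BASELINE_TTFT_S)
fast = response_time(N_TOKENS, FAST_TPS, FAST_TTFT_S)

print(f"baseline: {baseline:.2f} s, fast: {fast:.2f} s")
```

Under these assumptions the same 300-token suggestion drops from about 5 seconds to about half a second, which is the difference between waiting on a tool and staying inside a sub-second loop. Different baseline values would shift the absolute numbers but not the shape of the comparison.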