Anthropic's Cybersecurity Model 'Claude Mythos Preview' Aims to Repair Government Ties.

The AMW Read

Updates the Anthropic case study (§4) by showing a strategic pivot toward defensive government use-cases to resolve geopolitical and safety-related friction (cross.§E, cross.§G).

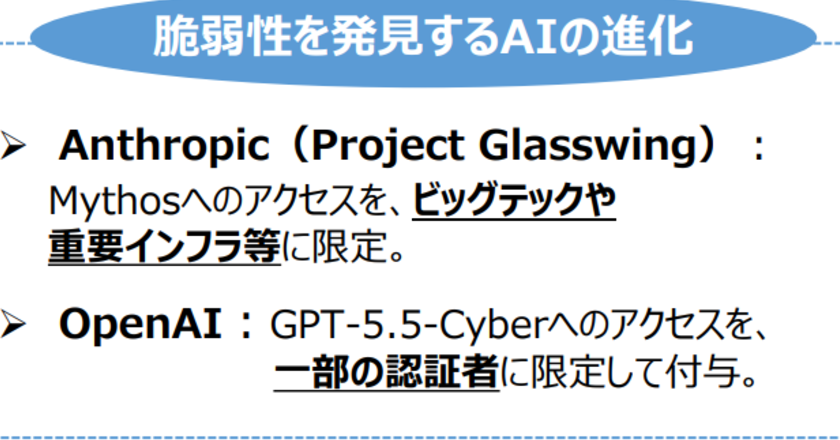

Anthropic has launched a new, powerful cybersecurity-focused AI model called Claude Mythos Preview, which it is privately offering to major corporations like Apple, Nvidia, and JPMorgan Chase. This follows a public dispute with the Trump administration, which had labeled Anthropic a national security risk after the company refused to allow its technology to be used for domestic mass surveillance or lethal autonomous weapons. The company's recent efforts, including briefing senior U.S. government officials on Mythos and CEO Dario Amodei's reported White House meeting, signal an attempt to mend the relationship. Anthropic has also reportedly hired the lobbying firm Ballard Partners, which is linked to Trump.

The situation highlights the critical and volatile intersection of advanced AI development and government regulation, particularly in national security. For the AI market, it underscores how a company's positioning on ethical red lines can directly affect its access to significant government contracts and partnerships. Anthropic's past status as the first company with models cleared for classified military networks demonstrates the high stakes of this sector. The release of Mythos sparked emergency meetings between bank leaders and the Federal Reserve, which shows the immediate enterprise value placed on AI for critical infrastructure security and how that value shapes adoption cycles and competitive dynamics among model providers.

Analytically, this is a pragmatic pivot by Anthropic: it uses a high-demand technical capability, cybersecurity, as a diplomatic tool to restore a key revenue and influence channel. The move is less a fundamental shift in the company's stated ethical principles than a demonstration that it can offer a product aligned with defensive government interests. The engagement with a Trump-linked lobbying firm and high-level White House access suggests a calculated strategy to navigate political headwinds. The market takeaway is that in the current geopolitical climate, AI labs must balance principled stances with tangible offerings that serve core government operational needs if they are to maintain their market position and growth trajectories.