DeepSeek-V4 Preview Model Launches with 1M Token Context Window, Open-Sourced

The AMW Read

An incremental update from a known player (DeepSeek), but the release advances the open-weight trend and carries segment-level significance for coding and long-context capabilities.

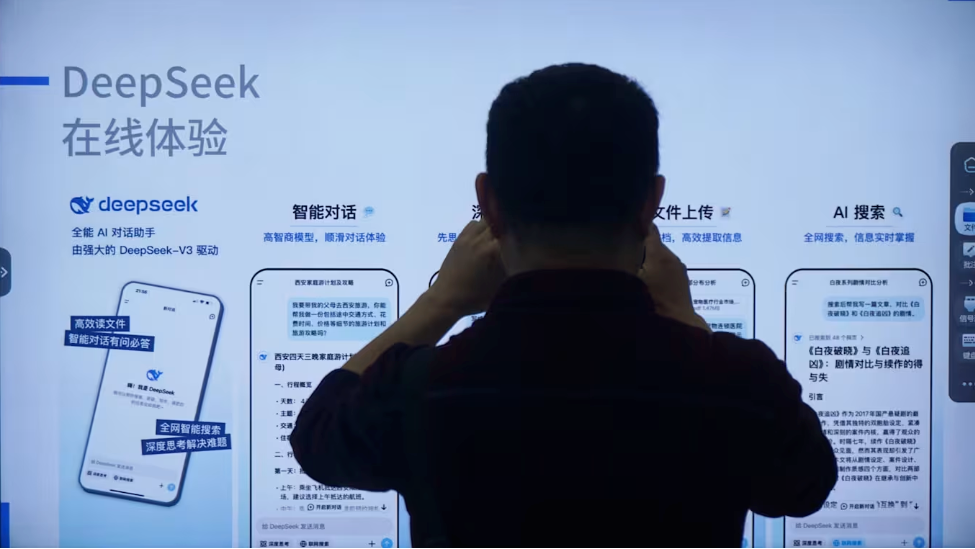

DeepSeek announced its DeepSeek-V4 preview model on April 24, 2025. The new series comes in Pro and Flash variants, supports a 1 million token context window, and is released as open source. According to DeepSeek, the Pro version performs comparably to top-tier closed-source models and achieves state-of-the-art results among open models on Agentic Coding benchmarks. DeepSeek-V4 combines a novel attention mechanism with DSA (DeepSeek Sparse Attention) to reduce compute and memory requirements. The API service has already been updated to support the new models.
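To give a rough sense of why sparse attention matters at a 1 million token context, the sketch below counts query-key pairs under dense causal attention versus a generic top-k sparse scheme. This is back-of-the-envelope arithmetic, not a description of DeepSeek's actual mechanism: the function names and the budget `k = 2048` are hypothetical, and the cost of choosing which keys to keep is ignored.

```python
def dense_pairs(n: int) -> int:
    """Query-key pairs scored by dense causal attention over n tokens.

    Token i (1-indexed) attends to itself and all i - 1 earlier tokens.
    """
    return n * (n + 1) // 2


def sparse_pairs(n: int, k: int) -> int:
    """Query-key pairs when each token attends to at most k keys.

    Assumption for illustration only: a generic top-k scheme in which
    token i attends to min(i, k) keys. Selection overhead is not counted.
    """
    k = min(k, n)
    # The first k tokens see fewer than k keys; the rest see exactly k.
    return k * (k + 1) // 2 + (n - k) * k


if __name__ == "__main__":
    n = 1_000_000   # context length reported in the announcement
    k = 2_048       # hypothetical per-token key budget, not from the source
    dense = dense_pairs(n)
    sparse = sparse_pairs(n, k)
    print(f"dense pairs:  {dense:,}")
    print(f"sparse pairs: {sparse:,}")
    print(f"reduction:    {dense / sparse:.1f}x")
```

Under these assumptions the dense count grows quadratically with context length while the sparse count grows roughly linearly, which is the general reason a sparse scheme can cut compute and memory at long contexts.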

Why it matters: This release exemplifies the accelerating open-weight model race, in which Chinese AI labs are aggressively open-sourcing frontier-capability models to gain distribution and community adoption. DeepSeek's move intensifies the hyperscaler-distribution pattern: as open-source models become competitive with closed-source alternatives, margins for proprietary API providers may compress. The 1M context window, previously a differentiator for Google Gemini and some Chinese peers, is becoming a standard expectation, further commoditizing long-context capability.

Grounded expert take: DeepSeek is executing a deliberate strategy of releasing high-performance open-weight models to capture developer mindshare and enterprise deployments, especially in coding and agentic workflows. By optimizing for tools like Claude Code and OpenClaw, DeepSeek positions itself as a viable alternative to both closed-source and other open-source models. This release deepens the capital-compression arc for foundation model labs that rely on API revenue, as open models erode pricing power. However, the true competitive moat may shift to fine-tuning, serving infrastructure, and enterprise support rather than raw model performance.

#DeepSeek #OpenSource #FoundationModel #AICoding #1MContext #OpenWeight #ChinaAI