DeepSeek V4 Preview: 1.6 Trillion Parameters, Open-Weight Challenge to Frontier Labs

The AMW Read

Novelty 2: DeepSeek is a known player, but V4 represents a major technical iteration with full stack migration to domestic hardware. Significance 3: Cross-segment impact via cost collapse, sovereignty signaling, and open-weight pressure on frontier labs.

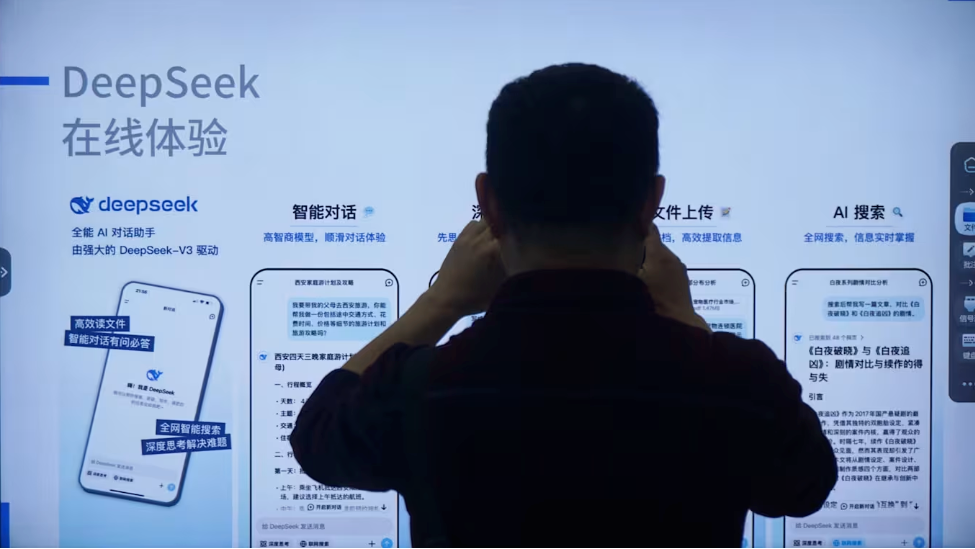

DeepSeek has released a preview of its V4 model, a 1.6 trillion-parameter mixture-of-experts architecture trained on 32 to 33 trillion tokens. Both the Pro and Flash tiers support a 1 million-token context window by default. V4 is the company's first flagship release since R1 in January 2025, following a 15-month development cycle that included migrating training from Nvidia CUDA to Huawei Ascend chips. The model is available as open weights under a permissive license, with API pricing starting at ¥0.2 per million tokens for Flash cache hits. According to the technical report, V4 cuts inference FLOPs by 73% and KV cache memory by 90% relative to its predecessor.
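To put the efficiency and pricing figures in more familiar terms, the short sketch below converts the reported percentage reductions into "times smaller" factors and estimates the cost of a full-context request at the quoted Flash cache-hit rate. The function names are illustrative, and the cost estimate assumes every input token is billed at the cache-hit price, which a real request would rarely achieve.

```python
def reduction_factor(percent_reduction: float) -> float:
    """Convert a percentage reduction into an 'N times smaller' factor."""
    remaining_fraction = 1.0 - percent_reduction / 100.0
    return 1.0 / remaining_fraction


def cache_hit_cost_yuan(tokens: int, price_per_million: float = 0.2) -> float:
    """Cost in yuan, assuming all tokens are billed at the cache-hit rate."""
    return tokens / 1_000_000 * price_per_million


# Figures quoted from the technical report, relative to the predecessor model.
flop_factor = reduction_factor(73)  # 27% of the FLOPs remain
kv_factor = reduction_factor(90)    # 10% of the KV cache memory remains

# Hypothetical request that fills the 1 million-token context window.
full_context_cost = cache_hit_cost_yuan(1_000_000)

print(f"Inference FLOPs: about {flop_factor:.1f}x fewer")
print(f"KV cache memory: about {kv_factor:.1f}x smaller")
print(f"1M cached input tokens: about ¥{full_context_cost:.2f}")
```

Read this way, a 73% reduction means roughly 3.7 times fewer inference FLOPs, and a 90% reduction means a KV cache about one tenth the size, which is what makes a 1 million-token default context plausible at this price point.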

This launch updates the ongoing open-weight versus closed-source debate within the foundation-model competitive landscape. DeepSeek's strategy of releasing frontier-capability models at dramatically lower cost, now paired with native adaptation to Chinese silicon, directly challenges the capital-intensive scaling narrative of US frontier labs. The V4 preview arrives alongside reports that DeepSeek is seeking its first external funding round at a valuation exceeding $20 billion, a reversal of founder Liang Wenfeng's long-standing refusal of outside capital. The company also faces headwinds, including high reported hallucination rates (94-96%), the absence of multimodal capabilities, and ongoing researcher attrition to competitors such as ByteDance and Xiaomi.

The combination of open-weight licensing and aggressive API pricing exemplifies the 'price-squeeze' pattern that has compressed margins across the model layer. By demonstrating that a purely open-weight lab can achieve near-frontier performance on domestic hardware, DeepSeek provides concrete evidence for the China-challenger frame in the open-weight debate: sovereign-AI pressure and algorithmic efficiency can substitute for unrestricted compute access. Industry observers note that V4's long-context capability and agent-optimized architecture position it as infrastructure for enterprise and government deployments that require data sovereignty.